Foundations of Success: Digital Experience Monitoring

We’ve all seen the rapid evolution of the workplace: the introduction of personal consumer devices, the explosion of SaaS providers, and the gradual blurring of the lines of responsibility for IT have introduced new complications to a role that once had a very clearly defined purview. In a previous post, we discussed quantification of user experience as a key metric for success in IT and, in turn, introduced a key piece of workspace analytics: Digital Experience Monitoring (DEM). That raises the question: what exactly is DEM about?

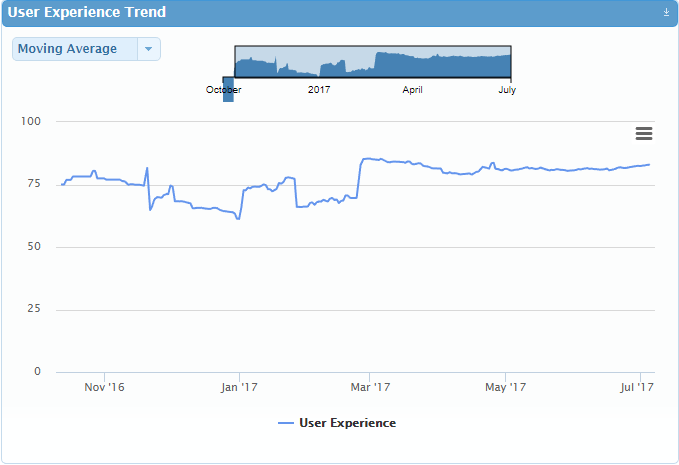

At its very heart, DEM is a method of understanding end users’ computing experience and how well IT is enabling them to be productive. This begins with establishing a concept of a user experience score as an underlying KPI for IT. With this score, it’s possible to proactively spot degradation as it occurs, and – perhaps even more importantly – it introduces a method for IT to quantifiably track its impact on the business. With this as a mechanism of accountability, the results of changes and new strategies can be trended and monitored as a benchmark for success.
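To make the idea of a score-as-KPI concrete, here is a minimal sketch in Python. It is not Lakeside's actual scoring method: the definition of the score (the share of a user's active time that was free of performance problems), the function names, and the 5-point tolerance are all assumptions chosen purely for illustration.

```python
from statistics import mean


def experience_score(active_minutes: float, impacted_minutes: float) -> float:
    """Percent of active time free of performance problems.

    Hypothetical definition for illustration only.
    """
    if active_minutes <= 0:
        raise ValueError("active_minutes must be positive")
    # Clamp impacted time into the range [0, active_minutes].
    impacted = min(max(impacted_minutes, 0.0), active_minutes)
    return 100.0 * (1.0 - impacted / active_minutes)


def is_degraded(history: list[float], latest: float, tolerance: float = 5.0) -> bool:
    """Flag the latest score if it falls more than `tolerance` points
    below the mean of the historical scores (an assumed, simple baseline)."""
    if not history:
        return False
    return latest < mean(history) - tolerance


# Made-up data: four ordinary days, then a day with an hour of impacted time.
daily_scores = [experience_score(480, m) for m in (12, 10, 15, 11)]
today = experience_score(480, 60)
print(round(today, 1), is_degraded(daily_scores, today))
```

Comparing each new score against a baseline built from recent history is one simple way to spot degradation as it occurs. A real deployment would likely weight different problem types and use a more robust baseline.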

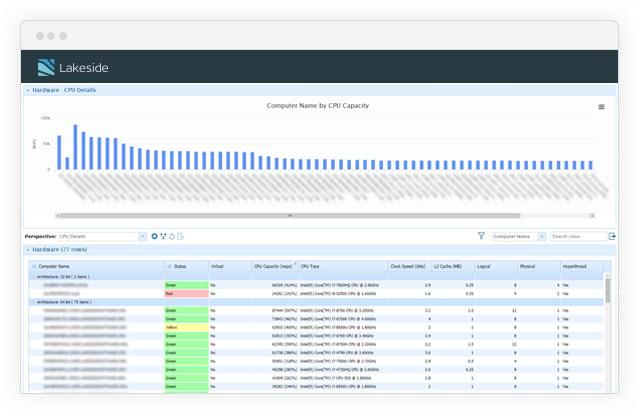

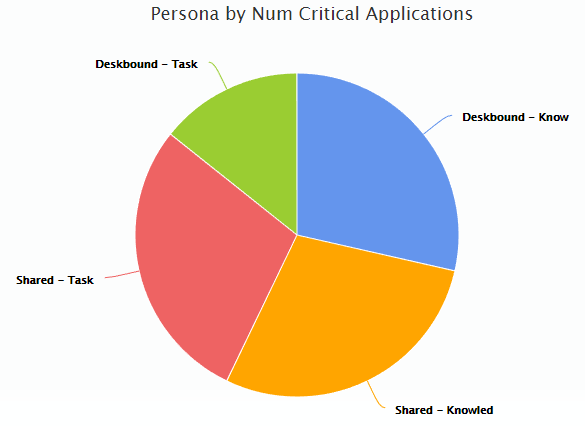

That measurable success criterion then serves as a baseline for comparison that threads its way through every aspect of DEM. It also provides a more informed basis for key analytical components that stem from observation of real user behavior, like continuous segmentation of users into personas. Starting with an analysis of how well the current computing environment meets users’ needs opens the door to exploring each aspect of their usage: application behaviors, mobility requirements, system resource consumption, and so on. From there, users can be assigned to Gartner-defined workstyles and roles, creating a mapping of what behaviors can be expected for certain types of users. This leads to more data-driven procurement practices, easier budget rationalization, and, overall, a more successful and satisfied user base.

Taking an example from a sample analysis, there are only a handful of critical applications per persona. Those applications are where users spend most of their productive time, and therefore have a much larger business impact. Discovery and planning around these critical applications can also dictate how best to provision resources for new employees with a similar job function. Prioritizing business-critical applications based on usage makes proactive management much more clear-cut. Automated analysis and resolution of problems can be focused on the systems where users are most active, which will have the maximum overall impact on improving user experience. In fact, that user experience can then be trended over time to show the real business impact of IT problem solving.
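As a rough illustration of usage-based prioritization, the sketch below ranks applications per persona by their share of active time. The persona names, application names, minutes, and the `critical_apps` helper are all hypothetical; they are not taken from the sample analysis mentioned above.

```python
from collections import defaultdict


def critical_apps(usage, top_n=3):
    """Rank applications per persona by share of total active minutes.

    `usage` is an iterable of (persona, app, minutes) records.
    Returns {persona: [(app, share_of_time), ...]} with at most top_n apps.
    """
    totals = defaultdict(lambda: defaultdict(float))
    for persona, app, minutes in usage:
        totals[persona][app] += minutes

    ranked = {}
    for persona, apps in totals.items():
        persona_total = sum(apps.values())
        if persona_total == 0:
            continue  # no recorded activity for this persona
        top = sorted(apps.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
        ranked[persona] = [(app, round(m / persona_total, 2)) for app, m in top]
    return ranked


# Made-up usage records: (persona, application, active minutes).
sample_usage = [
    ("knowledge worker", "email", 300),
    ("knowledge worker", "spreadsheet", 200),
    ("knowledge worker", "browser", 450),
    ("knowledge worker", "pdf viewer", 50),
    ("designer", "cad", 600),
    ("designer", "email", 200),
]
result = critical_apps(sample_usage, top_n=2)
print(result)
```

Ranking by share of time rather than raw minutes keeps personas with different headcounts comparable, which is one reasonable design choice among several.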

Various voices within Lakeside will go through pieces of workspace analytics over the coming months, and we’ll be starting with a more in-depth discussion of DEM. This will touch on several aspects of monitoring and managing a user’s digital experience, including the definition of personas, management of SLAs and IT service quality measurements, and budget rationalization. Throughout, we’ll be exploring the role of IT as an enabler of business productivity, and how the concept of a single user experience score can give an organization a level of insight into its business-critical resources that wouldn’t otherwise be possible.
